How to use Google Gemma 4 locally with llama.cpp

Similar to Ollama, you can also use llama.cpp to run LLM in your machine as well.

Getting Started

If you’re on macOS, you can install llama.cpp using brew like this

brew install llama.cpp

Running the LLM

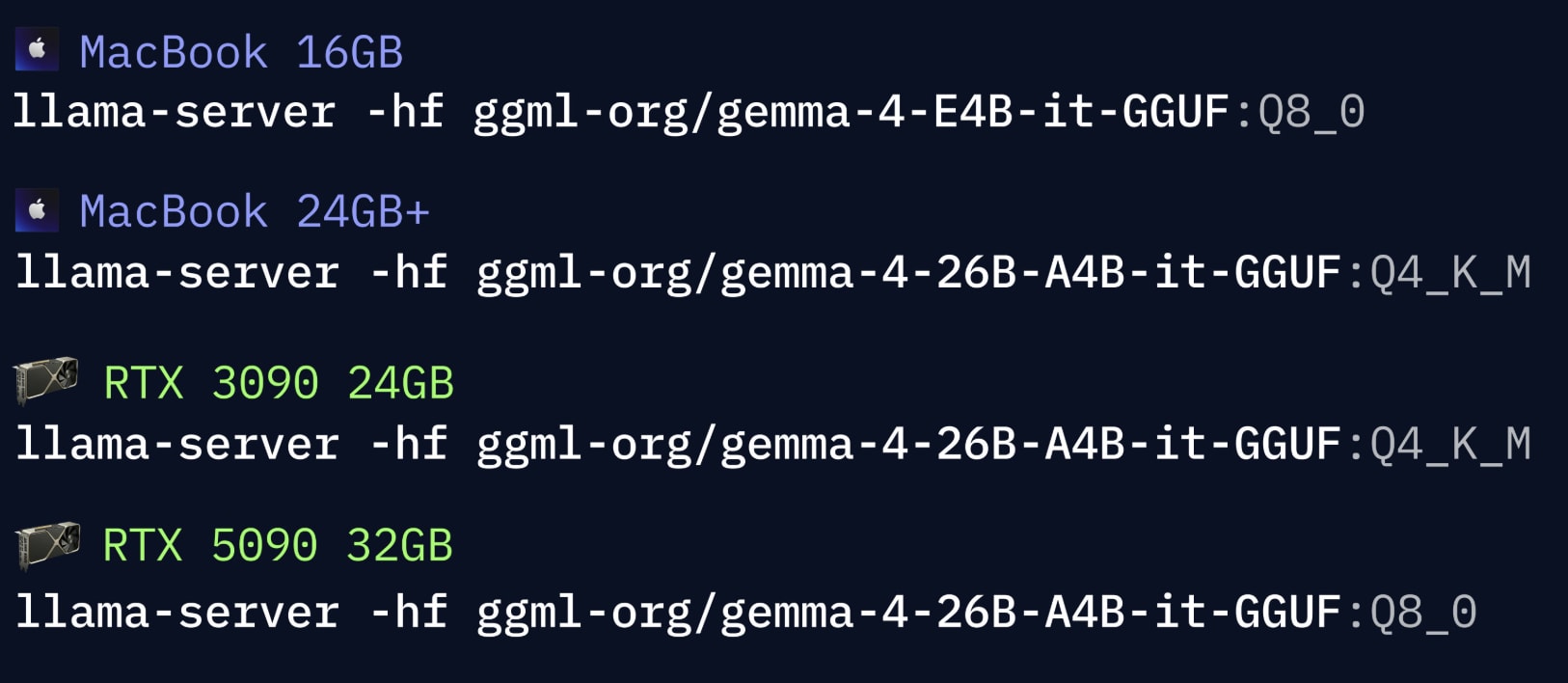

Depending on the machine, you might want to run appropriate LLM

llama-server -hf ggml-org/gemma-4-E4B-it-GGUF:Q8_0