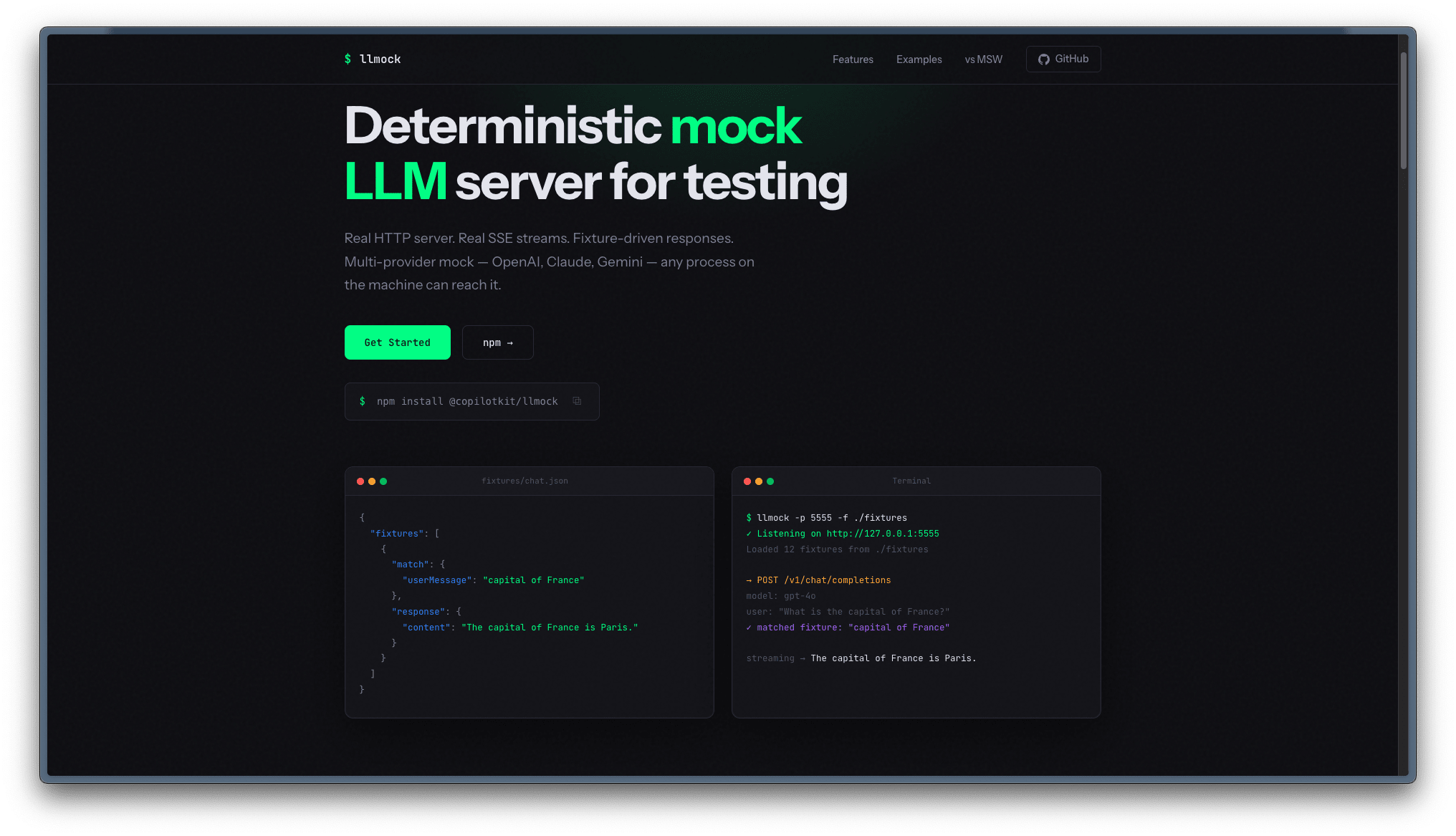

Mocking LLM calls with llmock

llmock lets you mock LLM inference. Just point your client's base URL at the llmock server, and the rest of your code works as before.
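To make the "point your base URL at it" idea concrete, here is a minimal sketch that uses only the Python standard library. It does not use llmock itself: `StandInMockHandler` is a hypothetical stand-in that returns a canned response in the OpenAI chat completion shape, and the `/v1/chat/completions` path and the `chat` helper are assumptions made for illustration. The point is that the client code only needs a different base URL.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class StandInMockHandler(BaseHTTPRequestHandler):
    """Hypothetical stand-in for a mock LLM server (not llmock's own code)."""

    def do_POST(self):
        # Read the incoming JSON request body.
        length = int(self.headers.get("Content-Length", 0))
        request = json.loads(self.rfile.read(length) or b"{}")
        # Reply with a canned payload shaped like a chat completion.
        body = json.dumps({
            "object": "chat.completion",
            "model": request.get("model", "mock-model"),
            "choices": [{
                "index": 0,
                "message": {"role": "assistant", "content": "mocked reply"},
                "finish_reason": "stop",
            }],
        }).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        # Silence the default per-request logging.
        pass


def chat(base_url, prompt):
    """Send one user message to whatever server base_url points at."""
    payload = json.dumps({
        "model": "any-model",
        "messages": [{"role": "user", "content": prompt}],
    }).encode()
    req = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    # Bypass any system proxy so the request reaches localhost directly.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    with opener.open(req) as resp:
        return json.load(resp)["choices"][0]["message"]["content"]


def demo():
    # Port 0 asks the OS for any free port.
    server = HTTPServer(("127.0.0.1", 0), StandInMockHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        # The only thing that changes versus a real provider is this URL.
        base_url = f"http://127.0.0.1:{server.server_port}/v1"
        return chat(base_url, "hello")
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    print(demo())
```

With a real mock server running, you would drop the stand-in handler and pass that server's address as `base_url` instead.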

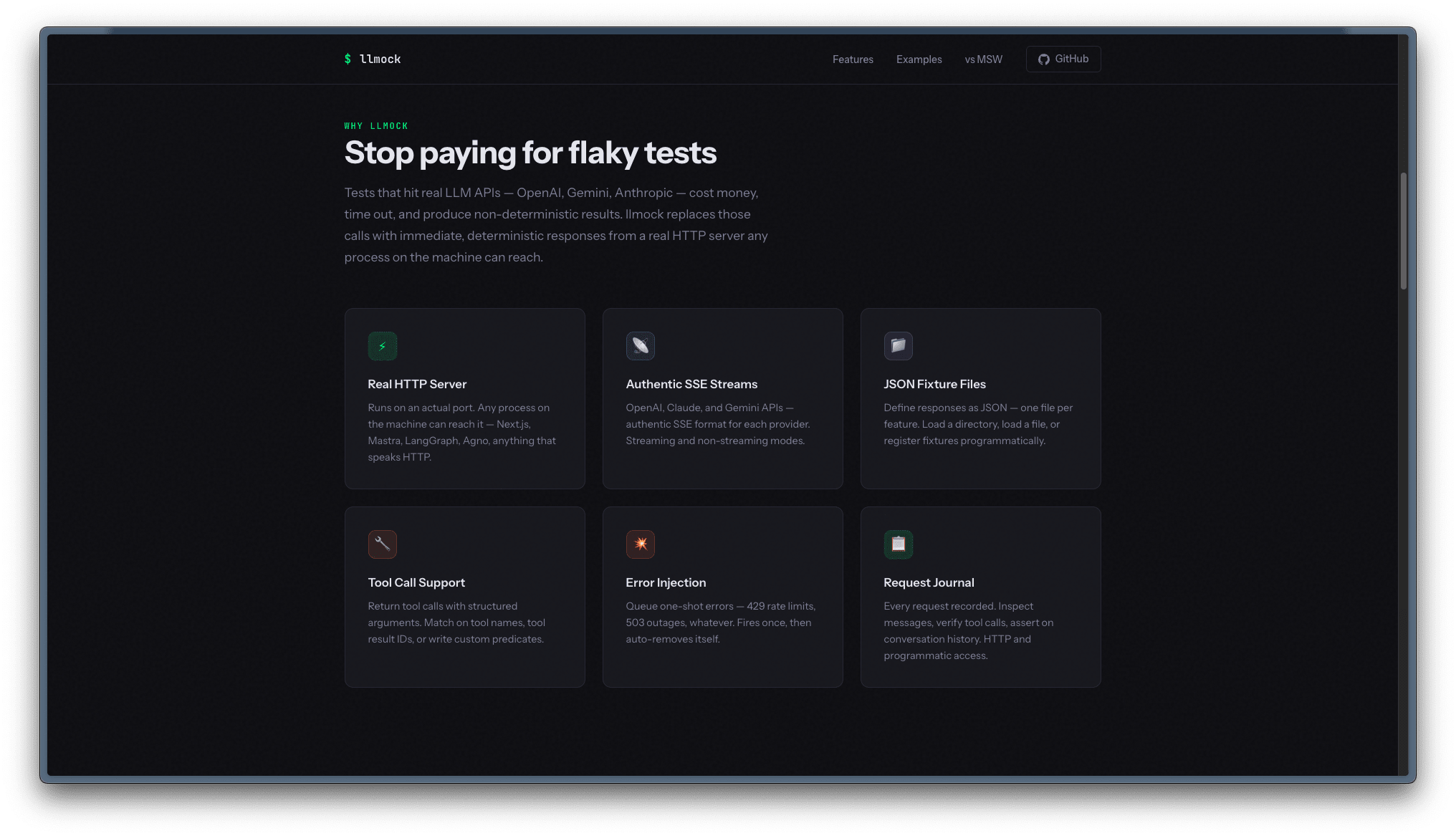

This is especially useful for tests that don't need to hit the actual LLM API.

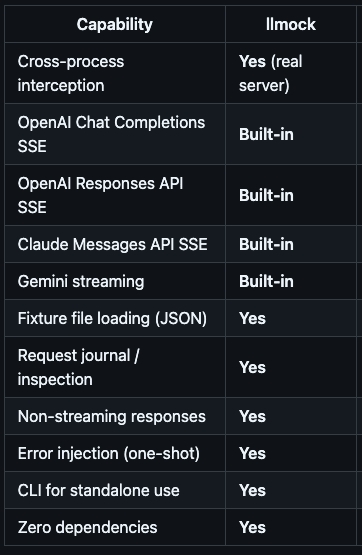

It supports the major inference formats, including OpenAI Chat Completions, the Responses API, and Anthropic's message format, among others.

It also has a wide range of features.